In the test and measurement industry, we constantly work to ensure that the measurements we take represent the actual phenomenon being characterized as closely as possible. When trying to minimize measurement errors, warm-up time and thermal stability effects in our equipment often become an afterthought. Testing issues such as transducer mounting, cabling, and equipment set-up, all of which cause much more noticeable errors in the measurement, typically occupy our minds. So why, with all of the technological advances in electronics, do we even need to worry about letting the equipment warm up or checking its thermal stability? Because we want to operate our equipment in as stable a state as possible to minimize our measurement uncertainty.

The warm-up time of measurement equipment is driven by the settling time of all of the precision components in the particular piece of equipment being used. The settling time includes the effects of the temperature and power coefficients in precision

resistors, as well as other components, such as precision voltage references, analog to digital converters, digital to analog converters, etc. Other effects also factor into the equipment warm-up time, such as the same power and temperature coefficients

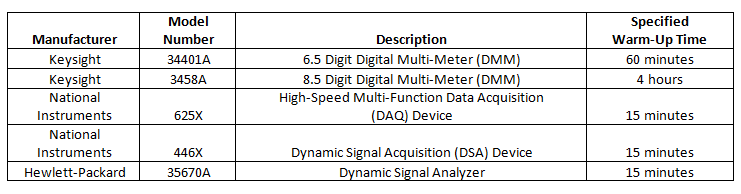

of the power supply for the equipment, the charging time constant of filter or bias circuits (more prevalent in transducers), etc. Table 1 shows the specified warm-up time for several common pieces of measurement equipment.

Table 1: Specified Equipment Warm-Up Times
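To get a feel for how a charging time constant turns into a waiting time, the following is a minimal sketch that assumes the filter or bias circuit behaves as a simple first-order (single RC) system. The function name `settling_time` and the example values are illustrative only; real equipment combines many effects, so the manufacturer's specified warm-up time should always take precedence.

```python
import math


def settling_time(tau_s: float, tolerance: float) -> float:
    """Time for a first-order step response to come within `tolerance`
    (a fraction of the final value, e.g. 0.01 for 1 %) of its final value.

    Assumes a single time constant `tau_s` in seconds (hypothetical model).
    """
    if tau_s <= 0:
        raise ValueError("tau_s must be positive")
    if not 0 < tolerance < 1:
        raise ValueError("tolerance must be between 0 and 1")
    # Remaining error decays as exp(-t / tau), so t = tau * ln(1 / tolerance).
    return tau_s * math.log(1.0 / tolerance)


if __name__ == "__main__":
    # Example: an assumed 2 s bias-circuit time constant, settled to within 1 %.
    print(f"{settling_time(2.0, 0.01):.2f} s")
```

Under this assumption, settling to within 1 % takes about 4.6 time constants, which is why a circuit with a time constant of only a few seconds can still need noticeably longer before readings are trustworthy.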

To eliminate the need to worry about warm-up time, many laboratories leave their equipment on all of the time. This works fine in a laboratory setting, but for field testing and other specific situations, leaving the equipment powered is not always practical or possible.

Unfortunately, warm-up time is only part of the thermal consideration when taking a measurement. We also need to consider the thermal stability of our equipment. Thermal stability is based on the temperature coefficient for the specific functions of the equipment in use (and the power coefficient, if applicable). The factors included in the temperature coefficient of a piece of equipment are typically the gain drift and the offset drift. For the National Instruments 625X series DAQ devices, the absolute accuracy table for the analog output is shown in Table 2.

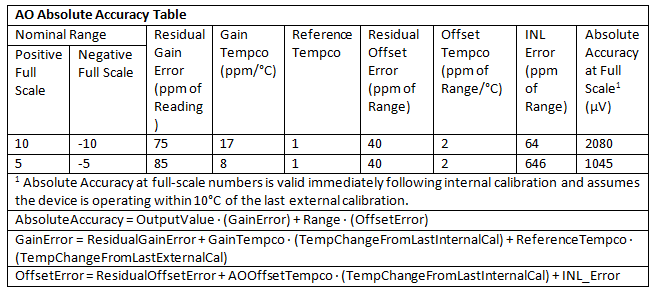

Table 2: AO Absolute Accuracy (NI 625X DAQ Devices)
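As a rough illustration of how gain drift and offset drift contribute to error, the sketch below assumes that the gain temperature coefficient applies to the output value and the offset temperature coefficient applies to the full-scale range, both expressed in ppm/°C. This is a common convention, but the formulas on a given spec sheet may include additional terms (residual errors, for example) that are not modeled here. The function name and the coefficient values in the example are hypothetical placeholders, not values from Table 2.

```python
def thermal_drift_error(
    output_v: float,
    range_v: float,
    gain_tempco_ppm_per_c: float,
    offset_tempco_ppm_per_c: float,
    delta_t_c: float,
) -> float:
    """Estimate the temperature-induced error, in volts, for a given output.

    Simplified model (assumption): gain drift scales with the output value,
    offset drift scales with the full-scale range.
    """
    dt = abs(delta_t_c)  # drift magnitude does not depend on direction here
    gain_term = abs(output_v) * gain_tempco_ppm_per_c * 1e-6 * dt
    offset_term = range_v * offset_tempco_ppm_per_c * 1e-6 * dt
    return gain_term + offset_term


if __name__ == "__main__":
    # Placeholder coefficients: 10 ppm/°C gain, 2 ppm/°C offset, 5 °C change.
    err = thermal_drift_error(5.0, 10.0, 10.0, 2.0, 5.0)
    print(f"Estimated drift error: {err * 1e6:.0f} µV")
```

With these placeholder numbers, a 5 V output on a 10 V range picks up roughly 350 µV of thermal error for a 5 °C change, which shows how quickly an uncompensated temperature shift can consume an accuracy budget.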

In the table, we can see that the gain temperature coefficient and offset temperature coefficient are both clearly specified in ppm/°C, and the formulas to calculate the error are shown at the bottom of the table. Fortunately, many equipment manufacturers include the ability to perform internal calibrations to automatically account for the thermal stability of the equipment. If the manufacturer has built an internal calibration capability into the device, the factors that dictate when to perform an internal calibration are also stated on the spec sheet. For example, the NI 446X DSA devices specify a yearly interval for the external calibration period, but recommend performing an internal calibration any time the ambient temperature differs by more than 5 °C from the temperature at the time of the external calibration.
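A rule like this is easy to build into a test script. The following is a minimal sketch of such a check, assuming the ambient temperature is read from some external sensor and the temperature at the last external calibration is known from the calibration record. The function name and default threshold are illustrative, and the sketch deliberately does not call any vendor driver; triggering the internal calibration itself depends on the device's own software.

```python
def needs_internal_calibration(
    ambient_temp_c: float,
    calibration_temp_c: float,
    threshold_c: float = 5.0,
) -> bool:
    """Return True if the ambient temperature has moved more than
    `threshold_c` away from the temperature recorded at calibration."""
    return abs(ambient_temp_c - calibration_temp_c) > threshold_c


if __name__ == "__main__":
    # Example: calibrated at 23 °C, now running a field test at 30 °C.
    if needs_internal_calibration(30.0, 23.0):
        print("Temperature shift exceeds threshold: run internal calibration.")
    else:
        print("Within threshold: no internal calibration needed.")
```

Running the check once after warm-up and again periodically during a long test catches slow ambient changes that would otherwise go unnoticed.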

In our ever-present efforts to make the most accurate measurements possible, it can be easy to forget about the thermal characteristics of the equipment we are using to take our data. Even if your measurement does not need to be so accurate as to account for the temperature coefficients of the equipment, allowing the proper amount of time for the equipment to warm up will at least allow the equipment to stabilize before starting a test.